Machine Learning Trick of the Day (7): Density Ratio Trick

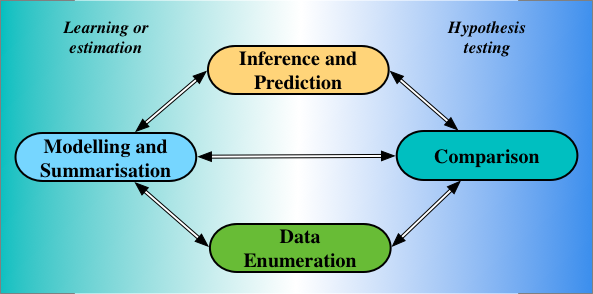

A probability on its own is often an uninteresting thing. But when we can compare probabilities, that is when their full splendour is revealed. By comparing probabilities we …