We now routinely build complex, highly-parameterised models in an effort to address the complexities of modern data sets. We design our models so that they have enough 'capacity', and this is now second nature to us using the layer-wise design principles of deep learning. But some problems continue to affect us, those that we encountered even in the low-data regime, the problem of overfitting and seeking better generalisation.

The classical description of deep feedforward networks in part I or of recurrent networks in part IV established maximum likelihood as the the underlying estimation principle for these models. Maximum Likelihood (ML) [cite key=le1990maximum] is an elegant, conceptually simple and easy to implement estimation framework. And it has several statistical advantages, including consistency and asymptotic efficiency. Deep learning has shown just how effective ML can be. But it is not without its disadvantages, the most prominent being a tendency for overfitting. Overfitting is the problem of all statistical sciences, and ways of dealing with this are abound. The general solution reduces to considering an estimation framework other than maximum likelihood — this penultimate post explores some of the available alternatives.

Regularisers and Priors

The principle technique for addressing overfitting in deep learning is by regularisation — adding additional penalties to our training objective that prevents the model parameters from becoming large and from fitting to the idiosyncrasies of the training data. This transforms our estimation framework from maximum likelihood into a maximum penalised likelihood, or more commonly maximum a posteriori (MAP) estimation (or a shrinkage estimator). For a deep model with loss function

![]()

and parameters

![]()

, we instead use the modified loss that includes a regularisation function

![]()

:

![]()

![]()

is a regularisation coefficient that is a hyperparameter of the model. It is also commonly known that this formulation can be derived by considering a probabilistic model that instead of a penalty, introduces a prior probability distribution over the parameters. The loss function is the negative of the log joint probability distribution:

![]()

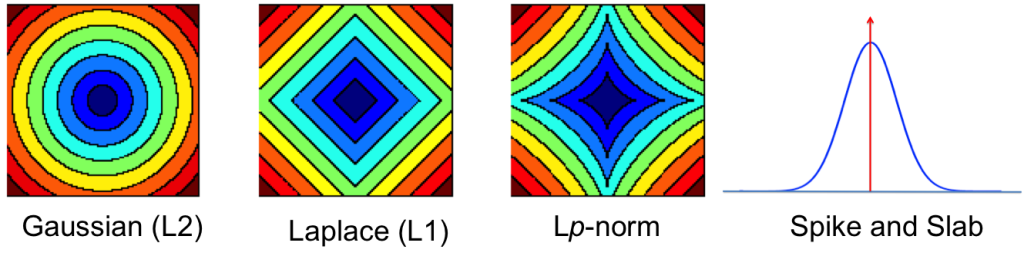

The table shows some common regularisers, of which the L1 and L2 penalties are used in deep learning. Most other regularisers in the probabilistic literature cannot be added as a simple penalty function, but are instead given by a hierarchical specification (and whose optimisation is also more involved, requiring some form of alternating optimisation). Amongst the most effective are the sparsity inducing penalties such as Automatic Relevance Determination, the Normal-Inverse Gaussian, the Horseshoe, and the general class of Gaussian scale-mixtures.

[table]

Name,

![]()

,

![]()

L2/Gaussian/Weight Decay,

![]()

,

![]()

![]()

,

![]()

![]()

,

![]()

![]()

, ""

![]()

, ""

![]()

,

![]()

[/table]

Invariant MAP Estimators

While these regularisers may prevent overfitting to some extent, the underlying estimator still has a number of disadvantages. One of these is that MAP estimators are not invariant to smooth reparameterisations of the model. MAP estimators reason only using the density of the posterior distribution on parameters and their solution thus depends arbitrarily on the units of measurement we use. The effect of this is that we get very different gradients depending on our units, with different scaling and behaviour that impacts our optimisation. The most general way of addressing this is to reason about the entire distribution on parameters instead. Another approach is to design an invariant MAP estimator [cite key=druilhet2007invariant], where we instead maximise the modified probabilistic model:

![]()

where

![]()

is the Fisher information matrix. It is the introduction of the Fisher information that gives us the transformation invariance, although using this objective is not practically feasible (requiring up to 3rd order derivatives). But this is an important realisation that highlights an important property we seek in our estimators. Inspired by this, we can use the Fisher information in other ways to obtain invariant estimators (and better-behaved gradients). This builds the link to, and highlights the importance of the natural gradient in deep learning, and the intuition and use of the minimum message length from information theory [cite key =jermyn2005invariant].

Dropout: With and Without Inference

Since the L2 regularisation corresponds to a Gaussian prior assumption on the parameters, this induces a Gaussian distribution on the hidden variables of a deep network. It is thus equally valid to introduce regularisation on the hidden variables directly. This is what dropout [cite key=srivastava2014dropout], one of the major innovations in deep learning, uses to great effect. Dropout also moves a bit further away from MAP estimation and closer to a Bayesian statistical approach by using randomness and averaging to provide robustness.

Consider an arbitrary linear transformation layer of a deep network with link/activation function

![]()

, input

![]()

, parameters

![]()

and the dimensionality of the hidden variable D. Rather than describing the computation as a warped linear transformation, dropout uses a modified probabilistic description. For i=1, ... D, we have two types of dropout:

![]()

![]()

![]()

In the Bernoulli case, we draw a 1/0 indicator for every variable in the hidden layer and include the variable in the computation if it is 1 and drop it out otherwise. The hidden units are now random and we typically call such variables latent variables. Dropout introduces sparsity into the latent variables, which in recent times has been the subject of intense focus in machine learning and an important way to regularise models. A feature of dropout is that it assumes that the the dropout (or sparsity probability) is always known and fixed for the training period. This makes it simple to use and has shown to provide an invaluable form of regularisation.

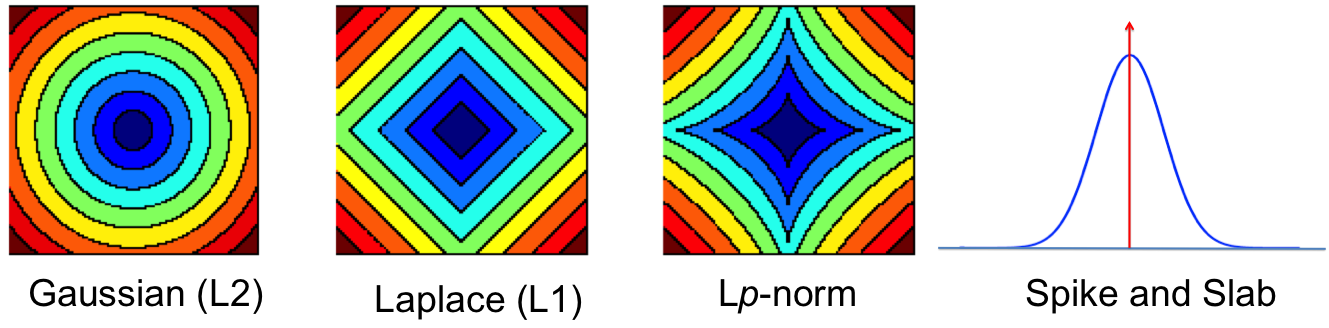

You can view the indicator variables

![]()

as a way of selecting which of the hidden features are important for computation of the current data point. It is natural to assume that the best subset of hidden features is different for every data point and that we should find and use the best subset during computation. This is the default viewpoint in probabilistic modelling, and when we make this assumption the dropout description above corresponds to an equally important tool in probabilistic modelling — that of models with spike-and-slab priors [cite key=ishwaran2005spike]. A corresponding spike-and-slab-based model, where the indicator variables are called the spikes and the hidden units, the slabs, would be:

![]()

We can apply spike-and-slab priors flexibly: it can be applied to individual hidden variables, to groups of variables, or to entire layers. In this formulation, we must now infer the sparsity probability

![]()

— this is the hard problem dropout sought to avoid by assuming that the probability is always known. Nevertheless, there has been much work in the use of models with spike-and-slab priors and their inference, showing that these can be better than competing approaches [cite key=mohamed2012bayesian]. But an efficient mechanism for large-scale computation remains elusive.

Summary

The search for more efficient parameter estimation and ways to overcome overfitting leads us to ask fundamental statistical questions about our models and of our chosen approaches for learning. The popular maximum likelihood estimation has the desirable consistency properties, but is prone to overfitting. To overcome this we moved to MAP estimation that help to some extent, but its limitations such as lack of transformation invariance leads to scale and gradient sensitivities that we can seek to ameliorate by incorporating the Fisher information into our models. We could also try other probabilistic regularisers whose unknown distribution we must average over. Dropout is one way of achieving this without dealing with the problem of inference, but were we to consider inference, we would happily use spike-and-slab priors. Ideally, we would combine all types of regularisation mechanisms, those that penalise both the weights and activations, assume they are random and that average over their unknown configuration. There are many diverse views on this issue; all point to the important research still to do.

[bibsource file=http://www.shakirm.com/blog-bib/SVDL5.bib]

One thought on “A Statistical View of Deep Learning (V): Generalisation and Regularisation”